Create a private GCP Kubernetes cluster using Terraform

In this article, I want to share how I approached creating a private Kubernetes (GKE) cluster in Google Cloud Platform (GCP).

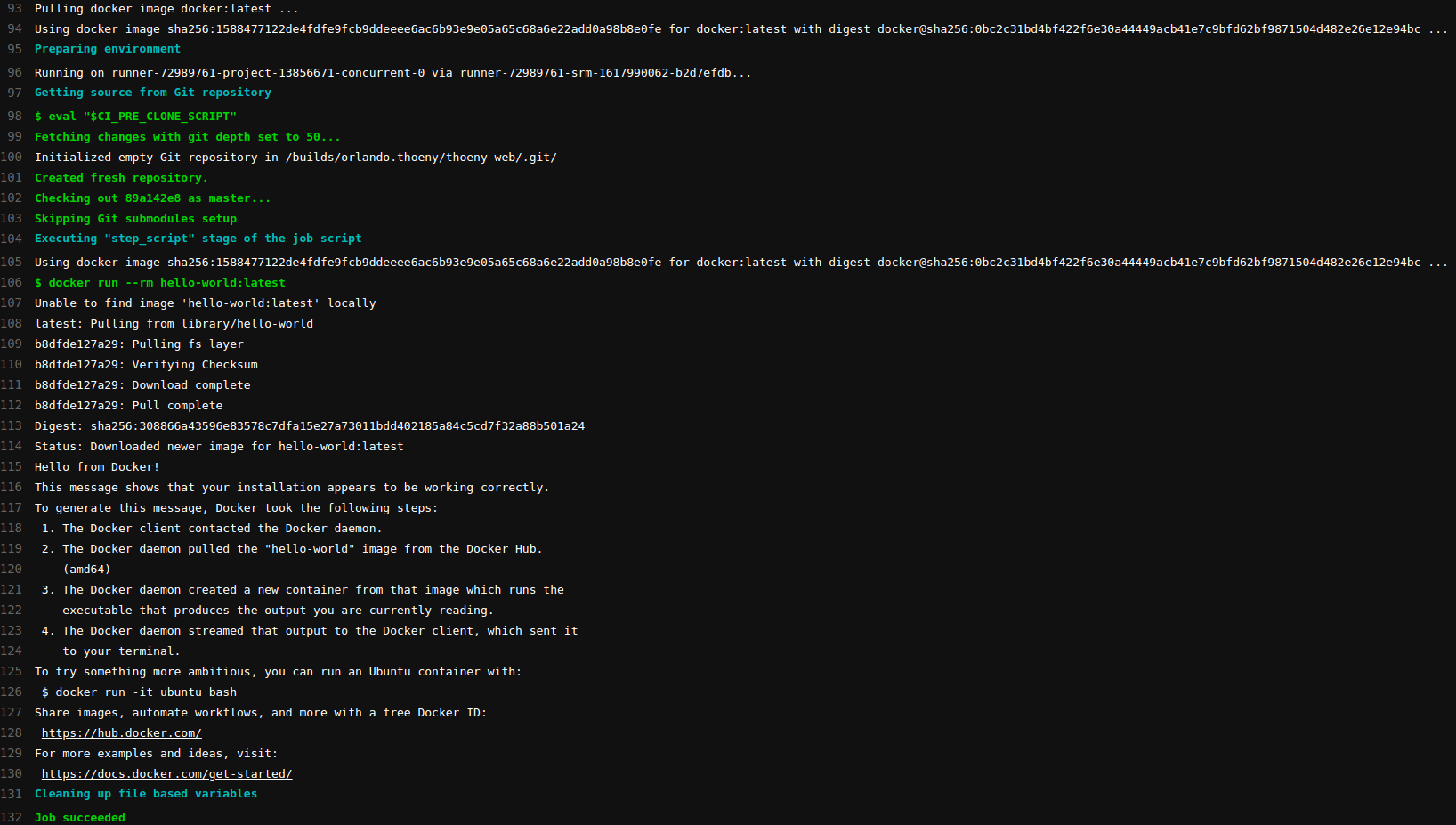

Target infrastructure

As an overview, this is the target infrastructure we’re aiming for:

A GKE cluster with Linux Worker Nodes.

A Load Balancer that routes external traffic to the Worker Nodes.

A NAT router that allows all our instances inside the VPC to access the internet.

A Bastion instance that allows us to access the Kubernetes Control Plane to run kubectl CLI commands. This is basically just a Linux machine with a proxy installed on it, exposed to the internet via an external IP address.

![GCP infrastructure with a private GKE cluster](https://cdn-images-1.medium.com/max/2476/1*xsexfkBNzwxKhRIhZbJs8A.jpeg)

GCP infrastructure with a private GKE cluster, created with https://draw.io

Introduction to Terraform

To get started with Terraform, I found the HashiCorp tutorials useful:

The Terraform Language Documentation (Reference)

There are also lots of other resources available.

Local setup

Google Cloud Platform access

To create resources in Google Cloud, you first need a Google account. GCP offers a $300 credit with a trial period of a month (at time of writing). You can sign up for this at https://cloud.google.com/.

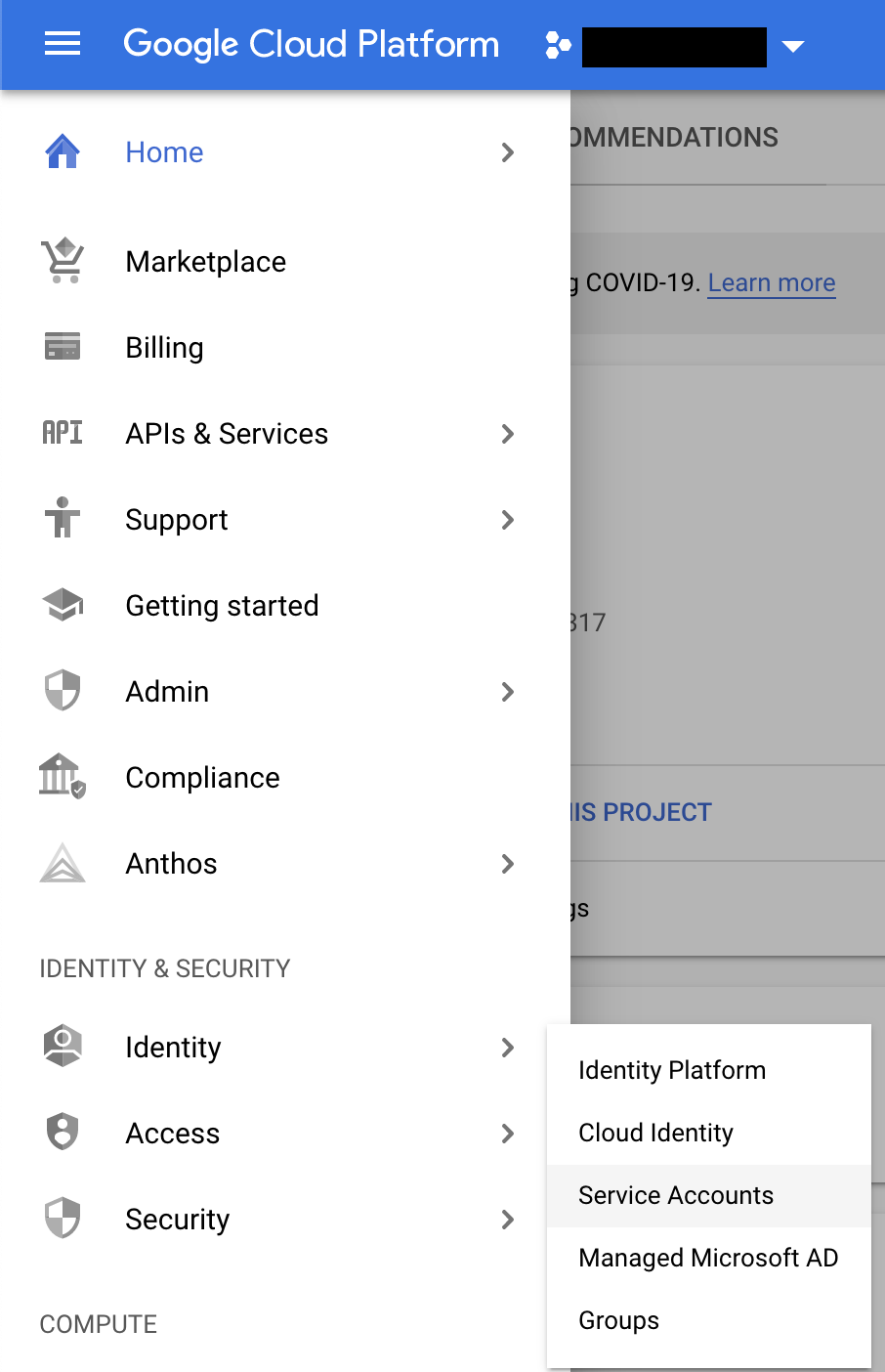

GCP Service account (or gcloud CLI as alternative)

We need to authenticate with GCP. Go to https://console.cloud.google.com/identity/serviceaccounts and create a service account. This will grant access to the GCP APIs.

After creating the service account, create a JSON key for it and download it locally.

Install Cloud SDK & Terraform CLI

To run Terraform locally, the Google Cloud SDK and the Terraform CLI need to be installed.

Create infrastructure with Terraform

You can find all the files on GitHub.

Setup repository

Clone the Git repository

git clone git@github.com:orlandothoeny/terraform-gcp-gke-infrastructure.git

Move the service account JSON key to infrastructure/service-account-credentials.json

Configure variables:

cp ./infrastructure/terraform.tfvars.example ./infrastructure/terraform.tfvars

Change the project_id and service_account values to your GCP project ID and the service account's email address.
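As a rough illustration, a filled-in terraform.tfvars could look like the sketch below. The project ID and email address are made-up placeholders, and the commented-out names are simply the other variables referenced in infrastructure/main.tf; the example file in the repository may set them differently or give them defaults.

```hcl
# infrastructure/terraform.tfvars (illustrative placeholder values, not real resources)
project_id      = "my-gcp-project-id"
service_account = "terraform@my-gcp-project-id.iam.gserviceaccount.com"

# Other variables referenced in main.tf. Uncomment only if they have no default.
# The values below are assumptions chosen for illustration.
# region             = "europe-west1"
# main_zone          = "europe-west1-b"
# cluster_node_zones = ["europe-west1-b"]
```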

Alternative to using Service Account key

As an alternative to the service account key, you can use the gcloud CLI tool. You can find the installation instructions for it here.

Then you can log in using gcloud auth login.

After that, store the OAuth access token that Terraform uses in the required environment variable:

export GOOGLE_OAUTH_ACCESS_TOKEN=$(gcloud auth print-access-token)

Keep in mind that this token is only valid for 1 hour (default).
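With this approach there is no key file for Terraform to read. A minimal sketch of the provider block for that case, assuming the same variables as the provider block in infrastructure/main.tf, simply leaves out the credentials argument. The Google provider then picks up the token from the GOOGLE_OAUTH_ACCESS_TOKEN environment variable.

```hcl
provider "google" {
  # No "credentials" argument here: the access token is read from the
  # GOOGLE_OAUTH_ACCESS_TOKEN environment variable exported above.
  project = var.project_id
  region  = var.region
  zone    = var.main_zone
}
```

Because the token expires, the export has to be repeated before running Terraform again in a later session.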

Now let’s get to the actual Terraform code:

Network

infrastructure/main.tf

terraform {

required_providers {

google = {

source = "hashicorp/google"

version = "3.51.0"

}

}

}

provider "google" {

// Only needed if you use a service account key

credentials = file(var.credentials_file_path)

project = var.project_id

region = var.region

zone = var.main_zone

}

module "google_networks" {

source = "./networks"

project_id = var.project_id

region = var.region

}

...

The terraform and provider blocks are needed to configure the GCP Terraform provider.
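The var.* references in these blocks need matching variable declarations. The following is only a sketch of what those declarations might look like. The file name, the types, the descriptions and the default value are assumptions, and the repository's own file may differ.

```hcl
# infrastructure/variables.tf (sketch; types, descriptions and the default are assumptions)
variable "project_id" {
  type        = string
  description = "ID of the GCP project to create resources in."
}

variable "region" {
  type        = string
  description = "Region for regional resources such as the subnet and the cluster."
}

variable "main_zone" {
  type        = string
  description = "Default zone for zonal resources."
}

variable "credentials_file_path" {
  type        = string
  description = "Path to the service account JSON key."
  # Assumed default, matching where the key was moved during setup.
  default     = "./service-account-credentials.json"
}
```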

The google_networks module is a local module located inside the ./networks directory. This module defines the network resources we need:

infrastructure/networks/main.tf

locals {

network_name = "kubernetes-cluster"

subnet_name = "${google_compute_network.vpc.name}--subnet"

cluster_master_ip_cidr_range = "10.100.100.0/28"

cluster_pods_ip_cidr_range = "10.101.0.0/16"

cluster_services_ip_cidr_range = "10.102.0.0/16"

}

resource "google_compute_network" "vpc" {

name = local.network_name

auto_create_subnetworks = false

routing_mode = "GLOBAL"

delete_default_routes_on_create = true

}

resource "google_compute_subnetwork" "subnet" {

name = local.subnet_name

ip_cidr_range = "10.10.0.0/16"

region = var.region

network = google_compute_network.vpc.name

private_ip_google_access = true

}

resource "google_compute_route" "egress_internet" {

name = "egress-internet"

dest_range = "0.0.0.0/0"

network = google_compute_network.vpc.name

next_hop_gateway = "default-internet-gateway"

}

resource "google_compute_router" "router" {

name = "${local.network_name}-router"

region = google_compute_subnetwork.subnet.region

network = google_compute_network.vpc.name

}

resource "google_compute_router_nat" "nat_router" {

name = "${google_compute_subnetwork.subnet.name}-nat-router"

router = google_compute_router.router.name

region = google_compute_router.router.region

nat_ip_allocate_option = "AUTO_ONLY"

source_subnetwork_ip_ranges_to_nat = "LIST_OF_SUBNETWORKS"

subnetwork {

name = google_compute_subnetwork.subnet.name

source_ip_ranges_to_nat = ["ALL_IP_RANGES"]

}

log_config {

enable = true

filter = "ERRORS_ONLY"

}

}

This creates a VPC and a subnet, as well as the NAT router that allows the instances inside our VPC to communicate with the internet.

Note: The IP ranges defined in the locals block, which will be used for the GKE cluster, must not overlap with the subnet’s CIDR range.

I defined these in the networking module to have all the networking related things in one place. They’ll be exposed as output variables and used by the kubernetes_cluster module.
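A possible shape for those outputs is sketched below. The output names are inferred from how infrastructure/main.tf references the module (for example module.google_networks.network.name), while the file name and descriptions are assumptions.

```hcl
# infrastructure/networks/outputs.tf (sketch; names inferred from the references in main.tf)
output "network" {
  value       = google_compute_network.vpc
  description = "The VPC resource, so callers can read attributes such as name."
}

output "subnet" {
  value       = google_compute_subnetwork.subnet
  description = "The subnet resource."
}

output "cluster_master_ip_cidr_range" {
  value       = local.cluster_master_ip_cidr_range
  description = "CIDR range reserved for the GKE control plane."
}

output "cluster_pods_ip_cidr_range" {
  value       = local.cluster_pods_ip_cidr_range
  description = "CIDR range reserved for pods."
}

output "cluster_services_ip_cidr_range" {
  value       = local.cluster_services_ip_cidr_range
  description = "CIDR range reserved for services."
}
```

Exporting the whole network and subnet resources, rather than single attributes, is what lets the root module write module.google_networks.network.name.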

Bastion instance

This module creates a virtual machine that runs a proxy. This will allow us to access the Kubernetes Control Plane from outside the GCP network, and run kubectl commands.

infrastructure/main.tf

...

module "bastion" {

source = "./bastion"

project_id = var.project_id

region = var.region

zone = var.main_zone

bastion_name = "app-cluster"

network_name = module.google_networks.network.name

subnet_name = module.google_networks.subnet.name

}

...

infrastructure/bastion/main.tf

locals {

hostname = format("%s-bastion", var.bastion_name)

}

// Dedicated service account for the Bastion instance.

resource "google_service_account" "bastion" {

account_id = format("%s-bastion-sa", var.bastion_name)

display_name = "GKE Bastion Service Account"

}

// Allow access to the Bastion Host via SSH.

resource "google_compute_firewall" "bastion-ssh" {

name = format("%s-bastion-ssh", var.bastion_name)

network = var.network_name

direction = "INGRESS"

project = var.project_id

source_ranges = ["0.0.0.0/0"] // TODO: Restrict further.

allow {

protocol = "tcp"

ports = ["22"]

}

target_tags = ["bastion"]

}

// The user-data script on Bastion instance provisioning.

data "template_file" "startup_script" {

template = <<-EOF

sudo apt-get update -y

sudo apt-get install -y tinyproxy

EOF

}

// The Bastion host.

resource "google_compute_instance" "bastion" {

name = local.hostname

machine_type = "e2-micro"

zone = var.zone

project = var.project_id

tags = ["bastion"]

boot_disk {

initialize_params {

image = "debian-cloud/debian-10"

}

}

shielded_instance_config {

enable_secure_boot = true

enable_vtpm = true

enable_integrity_monitoring = true

}

// Install tinyproxy on startup.

metadata_startup_script = data.template_file.startup_script.rendered

network_interface {

subnetwork = var.subnet_name

access_config {

// Not setting "nat_ip", use an ephemeral external IP.

network_tier = "STANDARD"

}

}

// Allow the instance to be stopped by Terraform when updating configuration.

allow_stopping_for_update = true

service_account {

email = google_service_account.bastion.email

scopes = ["cloud-platform"]

}

/* local-exec provisioners may run before the host has fully initialized.

However, they are run sequentially in the order they were defined.

This provisioner is used to block the subsequent provisioners until the instance is available. */

provisioner "local-exec" {

command = <<EOF

READY=""

for i in $(seq 1 20); do

if gcloud compute ssh ${local.hostname} --project ${var.project_id} --zone ${var.zone} --command uptime; then

READY="yes"

break;

fi

echo "Waiting for ${local.hostname} to initialize..."

sleep 10;

done

if [ -z "$READY" ]; then

echo "${local.hostname} failed to start in time."

echo "Please verify that the instance starts and then re-run 'terraform apply'"

exit 1

fi

EOF

}

scheduling {

preemptible = true

automatic_restart = false

}

}
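The firewall rule above still allows SSH from 0.0.0.0/0 and carries a TODO to restrict it. One possible way to do that is to pass in the allowed CIDR ranges. The sketch below assumes a new, hypothetical ssh_source_ranges variable that is not part of the original module.

```hcl
// Hypothetical variable (not in the original module): CIDR ranges allowed to SSH in.
variable "ssh_source_ranges" {
  type        = list(string)
  description = "CIDR ranges allowed to reach the Bastion instance on port 22."
  // Example only: 203.0.113.0/24 is a reserved documentation range, replace it with your own.
  default     = ["203.0.113.0/24"]
}

// Same rule as above, with the open range swapped for the variable.
resource "google_compute_firewall" "bastion-ssh" {
  name          = format("%s-bastion-ssh", var.bastion_name)
  network       = var.network_name
  direction     = "INGRESS"
  project       = var.project_id
  source_ranges = var.ssh_source_ranges

  allow {
    protocol = "tcp"
    ports    = ["22"]
  }

  target_tags = ["bastion"]
}
```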

This will also output two commands.

The content of the ssh output can be used to open an SSH tunnel to the Bastion instance.

kubectl_command can be used to run kubectl and use the Bastion instance as proxy.

infrastructure/bastion/outputs.tf

output "ip" {

value = google_compute_instance.bastion.network_interface.0.network_ip

description = "The internal IP address of the Bastion instance."

}

output "ssh" {

description = "GCloud ssh command to connect to the Bastion instance."

value = "gcloud compute ssh ${google_compute_instance.bastion.name} --project ${var.project_id} --zone ${google_compute_instance.bastion.zone} -- -L8888:127.0.0.1:8888"

}

output "kubectl_command" {

description = "kubectl command using the local proxy once the Bastion ssh command is running."

value = "HTTPS_PROXY=localhost:8888 kubectl"

}
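Outputs of a child module are not printed by terraform apply or terraform output unless the root module re-exports them. A minimal sketch of such root-level outputs follows. The file location and the output names bastion_ssh_command and bastion_kubectl_command are assumptions, not taken from the repository.

```hcl
// infrastructure/outputs.tf (sketch; output names are hypothetical)
output "bastion_ssh_command" {
  description = "Opens the SSH tunnel to the Bastion instance."
  value       = module.bastion.ssh
}

output "bastion_kubectl_command" {
  description = "kubectl prefixed with the proxy setting for the tunnel."
  value       = module.bastion.kubectl_command
}
```

With outputs like these in place, terraform output bastion_ssh_command would print the tunnel command after an apply.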

Kubernetes Cluster

This module creates a GKE cluster and Linux nodes inside the previously created network.

infrastructure/main.tf

...

module "google_kubernetes_cluster" {

source = "./kubernetes_cluster"

project_id = var.project_id

region = var.region

node_zones = var.cluster_node_zones

service_account = var.service_account

network_name = module.google_networks.network.name

subnet_name = module.google_networks.subnet.name

master_ipv4_cidr_block = module.google_networks.cluster_master_ip_cidr_range

pods_ipv4_cidr_block = module.google_networks.cluster_pods_ip_cidr_range

services_ipv4_cidr_block = module.google_networks.cluster_services_ip_cidr_range

authorized_ipv4_cidr_block = "${module.bastion.ip}/32"

}

...
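The module call above passes ten inputs. A sketch of matching declarations is shown below. The file name and types are assumptions. The null default for authorized_ipv4_cidr_block is also an assumption, chosen because the cluster resource checks that variable against null before adding the authorized network.

```hcl
// infrastructure/kubernetes_cluster/variables.tf (sketch; types and default are assumptions)
variable "project_id" { type = string }
variable "region" { type = string }
variable "service_account" { type = string }
variable "network_name" { type = string }
variable "subnet_name" { type = string }

variable "node_zones" {
  type        = list(string)
  description = "Zones in which the node pool creates nodes."
}

variable "master_ipv4_cidr_block" { type = string }
variable "pods_ipv4_cidr_block" { type = string }
variable "services_ipv4_cidr_block" { type = string }

variable "authorized_ipv4_cidr_block" {
  type        = string
  description = "CIDR block allowed to reach the control plane, or null for none."
  default     = null
}
```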

infrastructure/kubernetes_cluster/main.tf

resource "google_container_cluster" "app_cluster" {

name = "app-cluster"

location = var.region

# We can't create a cluster with no node pool defined, but we want to only use

# separately managed node pools. So we create the smallest possible default

# node pool and immediately delete it.

remove_default_node_pool = true

initial_node_count = 1

ip_allocation_policy {

cluster_ipv4_cidr_block = var.pods_ipv4_cidr_block

services_ipv4_cidr_block = var.services_ipv4_cidr_block

}

network = var.network_name

subnetwork = var.subnet_name

logging_service = "logging.googleapis.com/kubernetes"

monitoring_service = "monitoring.googleapis.com/kubernetes"

maintenance_policy {

daily_maintenance_window {

start_time = "02:00"

}

}

master_auth {

username = "my-user"

password = "useYourOwnPassword."

client_certificate_config {

issue_client_certificate = false

}

}

dynamic "master_authorized_networks_config" {

for_each = var.authorized_ipv4_cidr_block != null ? [var.authorized_ipv4_cidr_block] : []

content {

cidr_blocks {

cidr_block = master_authorized_networks_config.value

display_name = "External Control Plane access"

}

}

}

private_cluster_config {

enable_private_endpoint = true

enable_private_nodes = true

master_ipv4_cidr_block = var.master_ipv4_cidr_block

}

release_channel {

channel = "STABLE"

}

addons_config {

// Enable network policy (Calico)

network_policy_config {

disabled = false

}

}

/* Enable network policy configurations (like Calico).

For some reason this has to be in here twice. */

network_policy {

enabled = true

}

workload_identity_config {

identity_namespace = format("%s.svc.id.goog", var.project_id)

}

}

resource "google_container_node_pool" "app_cluster_linux_node_pool" {

name = "${google_container_cluster.app_cluster.name}--linux-node-pool"

location = google_container_cluster.app_cluster.location

node_locations = var.node_zones

cluster = google_container_cluster.app_cluster.name

node_count = 1

autoscaling {

max_node_count = 1

min_node_count = 1

}

max_pods_per_node = 100

management {

auto_repair = true

auto_upgrade = true

}

node_config {

preemptible = true

disk_size_gb = 10

service_account = var.service_account

oauth_scopes = [

"https://www.googleapis.com/auth/devstorage.read_only",

"https://www.googleapis.com/auth/logging.write",

"https://www.googleapis.com/auth/monitoring",

"https://www.googleapis.com/auth/servicecontrol",

"https://www.googleapis.com/auth/service.management.readonly",

"https://www.googleapis.com/auth/trace.append",

]

labels = {

cluster = google_container_cluster.app_cluster.name

}

shielded_instance_config {

enable_secure_boot = true

}

// Enable workload identity on this node pool.

workload_metadata_config {

node_metadata = "GKE_METADATA_SERVER"

}

metadata = {

// Set metadata on the VM to supply more entropy.

google-compute-enable-virtio-rng = "true"

// Explicitly remove GCE legacy metadata API endpoint.

disable-legacy-endpoints = "true"

}

}

upgrade_settings {

max_surge = 1

max_unavailable = 1

}

}

Creating the infrastructure

First, initialize Terraform:

cd infrastructure

terraform init

Next, create the infrastructure using the Terraform configuration:

terraform apply

The command will list all the GCP components Terraform will create. Accept by typing yes in the terminal. This will create all the infrastructure inside GCP, and take a few minutes.

Additional resources

Some resources that were useful to me: